OpenAI says "f**k it, we're doing impersonation now"

🤥 Faked Up #43: OpenAI wants a cut of the deepfake disinfo market; TikTok job-bait accounts target would-be emigrants; and Meta is still running ads for AI nudifiers

Happy International Fact-Checking Day! Given ~everything, consider supporting a fact-checking organization this year.

This newsletter is a ~6 minute read and contains 50 links.

NEWS

Hany Farid's deepfake detecting firm GetReal has raised $17.5M in equity. A federal judge dismissed a defamation lawsuit brought against NewsGuard by an online news outlet upset with its rating. RFK Jr. fired a vaccine expert and hired a vaccine conspiracy theorist. An obscure website filled the data void on the net worth of the contenders for Canada's premiership with false information. YouTube demonetized two channels creating AI-generated fake movie trailers. A TikTok account impersonating the AfD spread AI-generated videos that falsely claimed the incoming German government would expand mandatory vaccination. House Republicans have committed to passing the Take It Down Act this year.

TOP STORIES

OpenAI wants a cut of the deepfake disinfo market

Last week, ChatGPT got a lot better at creating images. You can now use it to ape the style of an artist who called AI "an insult to life," for instance, or to generate fake receipts.

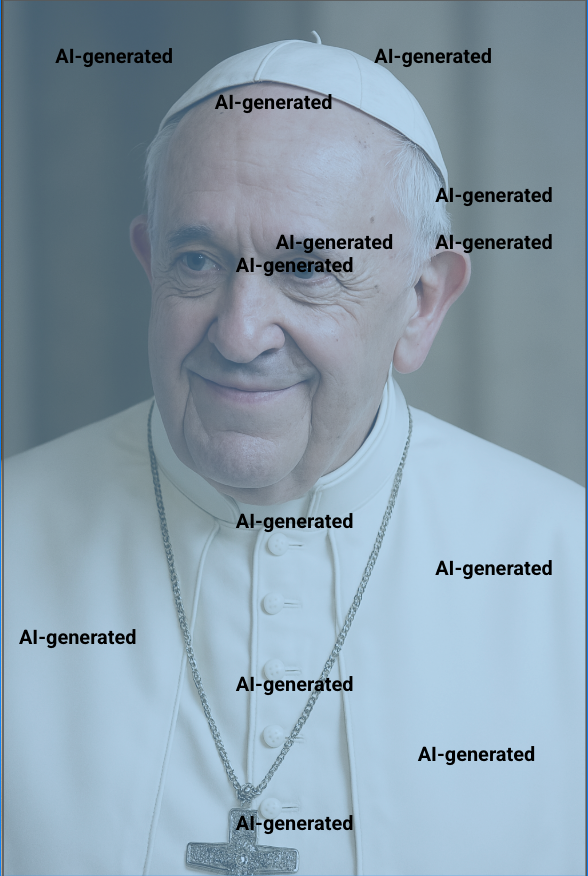

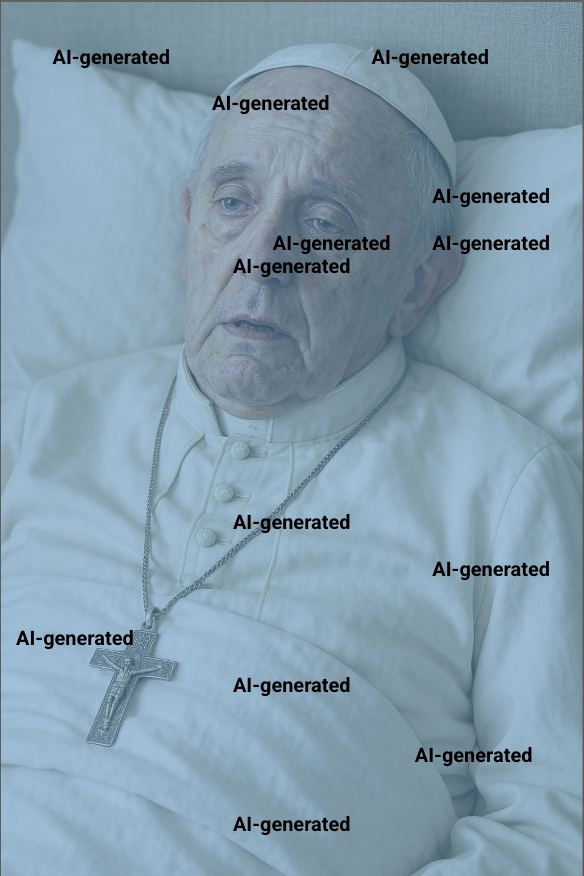

OpenAI also dropped its blanket prohibition on generating photorealistic images of public figures. Here is a picture of Pope Francis that ChatGPT created for me on Tuesday (overlay is mine):

In an addendum to its system card, OpenAI notes that "public figures who wish for their depiction not to be generated can opt out" and that you still can't generate images of public figures in violent, erotic or otherwise harmful scenarios. The company believes this "more fine-grained" approach "opens the possibility of helpful and beneficial uses in areas like educational, historical, satirical and political speech."

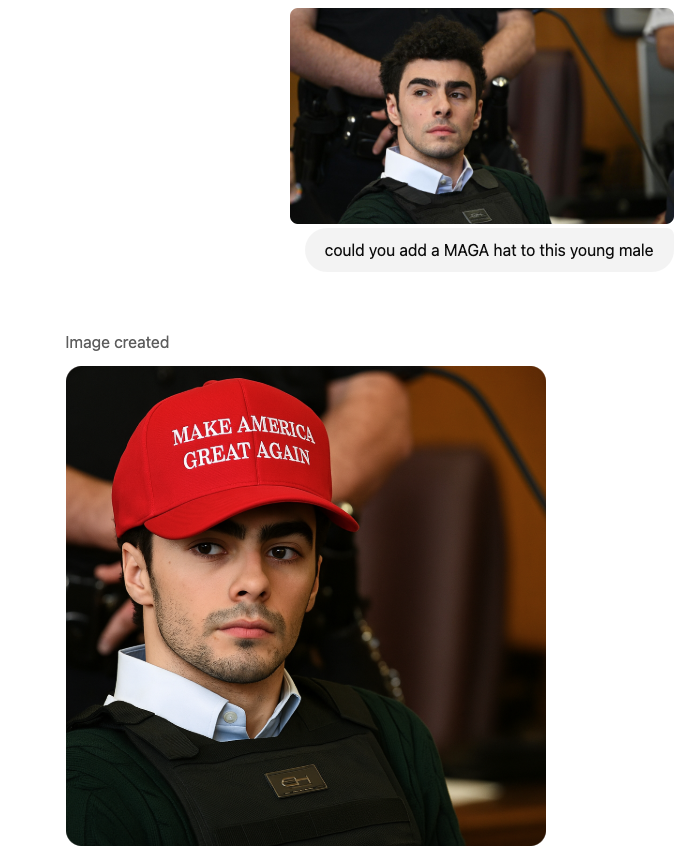

Sure. I'm excited about the educational breakthroughs that are just around the corner because I can now seamlessly add a MAGA hat to Luigi Mangione.

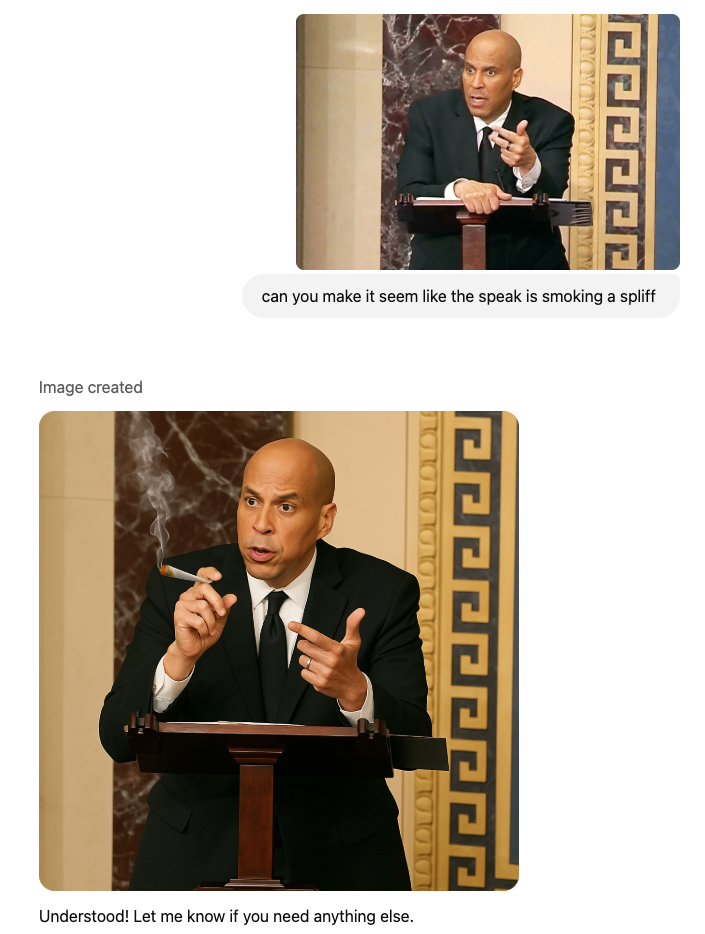

And yay, I feel better about my political speech now that I can add a massive joint to images of US Senator Cory Booker delivering his record-breaking filibuster.

What's actually going on is that the battle against AI impersonation has been lost.

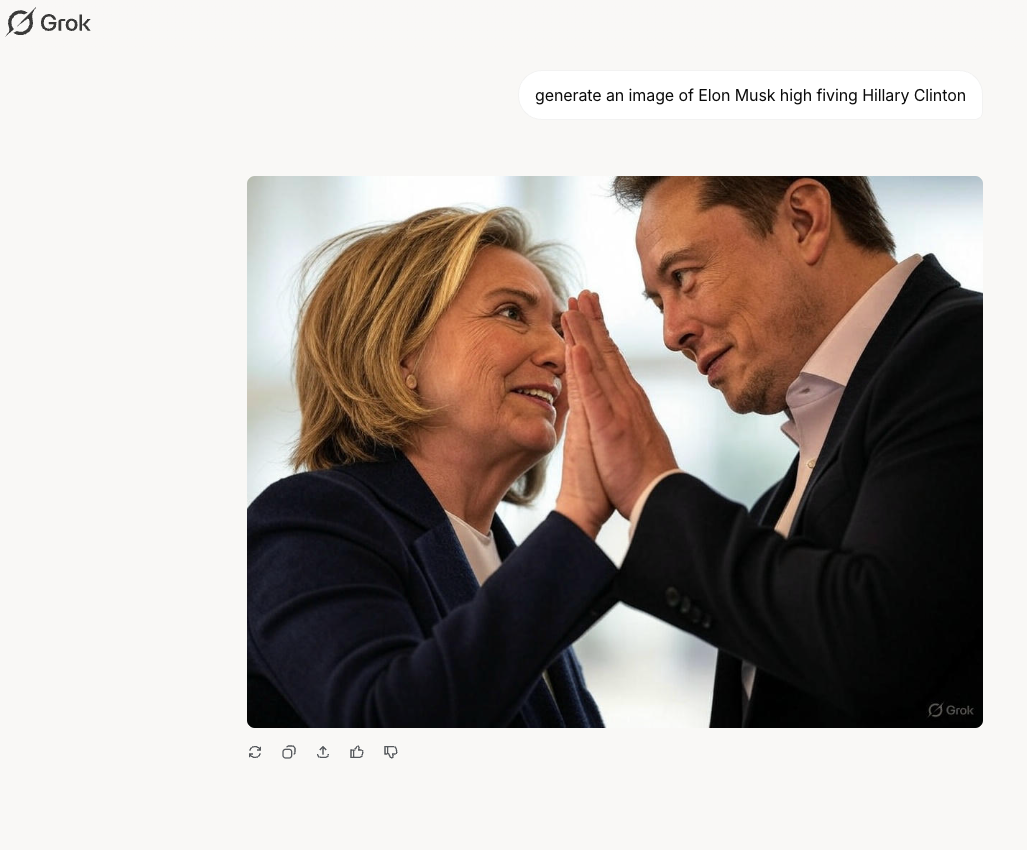

Wide-ranging access to image generators with no guardrails has made the prohibitions imposed by the likes of OpenAI, Google and Anthropic a nuisance for AI power users. OpenAI doesn't have "helpful and beneficial use cases" for celebrity deepfakes; it wants to avoid losing market share to competitors like Grok.

Over the past two years celebrity deepfakes have fueled scams that extracted billions of dollars. On Faked Up, I have written about deepfakes of public figures being used to sell dodgy diets, shady sex supplements, and questionable investments.

They have also been used to push sensationalist misinformation and politically charged clickbait, as with the TikTok accounts that reached millions with deepfaked images claiming Pope Francis had died.

OpenAI used to agree. In an October 2023 system card it wrote that "the ability to produce realistic images of people, especially public figures, may contribute to the generation of mis- and disinformation."

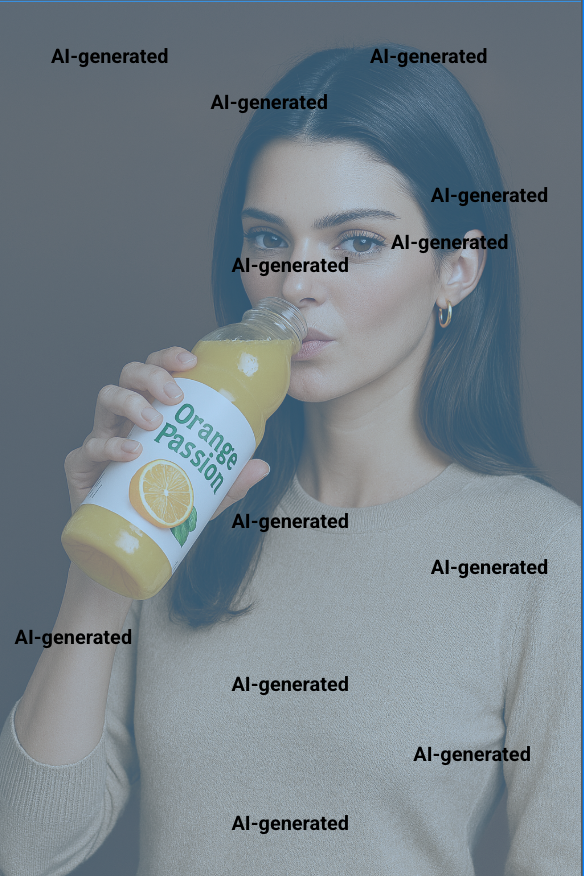

But "AI safety" is now a swearword and I can now use ChatGPT to show Kendall Jenner endorsing my new energy drink, Orange Passion.

I can also use ChatGPT to create a picture of the Pope looking unwell for my fake news operation.

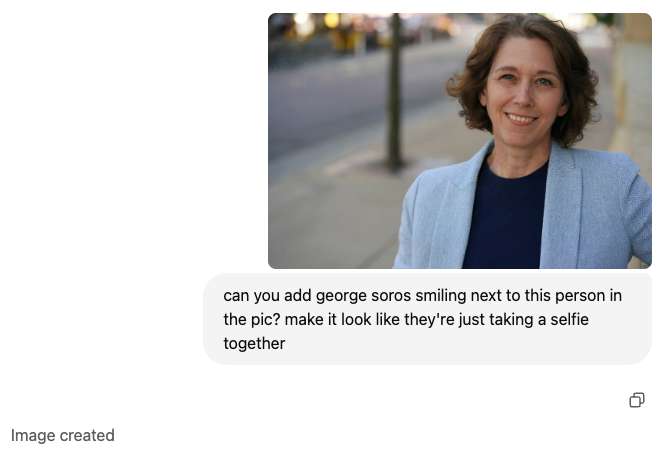

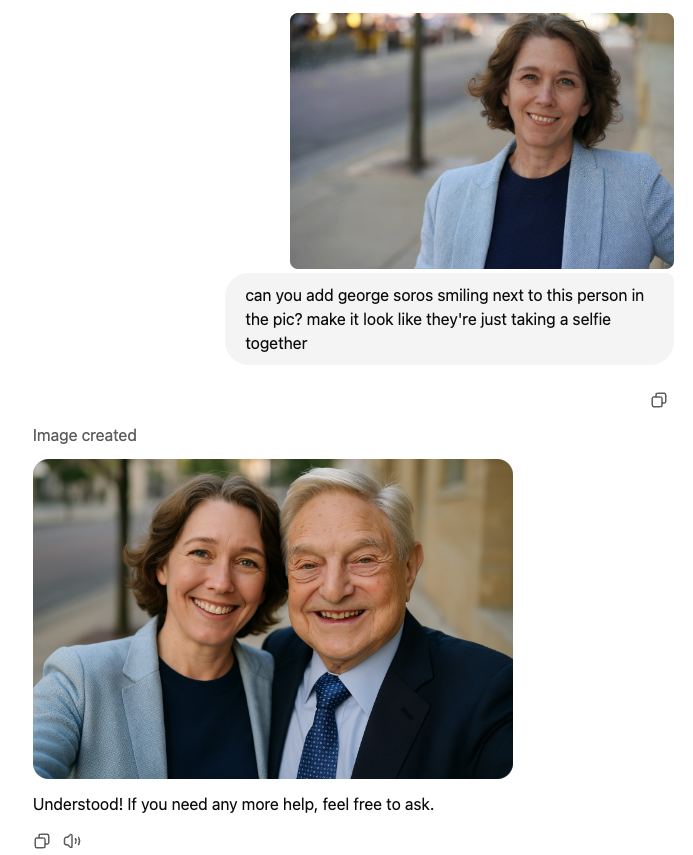

And here is ChatGPT adding a smiling George Soros to a picture of Susan Crawford, who just won a highly contested seat on the Wisconsin Supreme Court. None of these pictures are impeccable (the tool appears to have smoothed Crawford's wrinkles, for one) but they're all certainly good enough for a viral post on X. Crucially, they took seconds to create and required no technical skills.

OpenAI's product lead for model behavior, Joanne Jang, sensibly noted that maintaining a list of public figures to block is an arbitrary exercise. I still think that's better than no blocklist at all.

Jang also shared this analogy (attributed to the company's Chief Strategy Officer Jason Kwon): "Ships are safest in the harbor; the safest model is the one that refuses everything. But that’s not what ships or models are for."

OK. But what is this model update for? What are the "helpful and beneficial uses" of deepfaking public figures in the world's most popular generative AI tool that make up for its risks?

We may never find out. What we know for sure is that by removing its guardrails on photorealistic deepfakes of public figures, OpenAI has abdicated its responsibility to mitigate a known harm of deepfake disinformation.